What Happens After Coding Is Solved: Long Running Agents

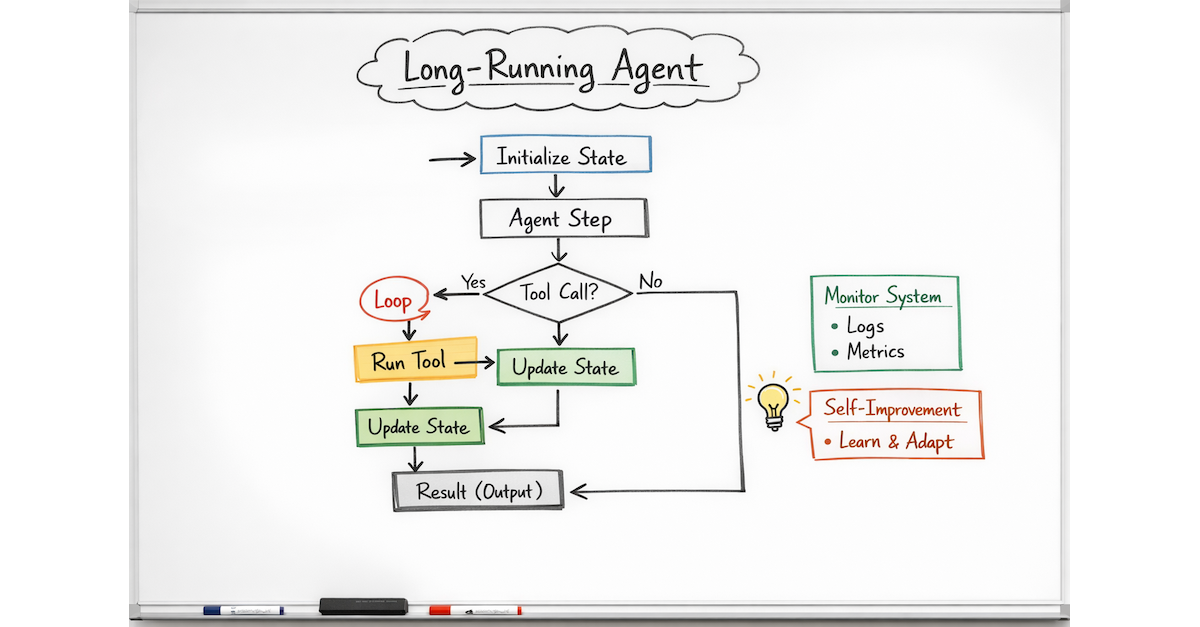

I've been working on a number of long running agents for fraud detection, data collection, and marketing research. To me, it makes more sense to call them continuously running agents, as the job never stops, but the popular choice is to call them long running agents. In “What Happens After Coding Is Solved”, Boris Cherny had a few interesting things to say about what is going on in Claude Code.

The project has a number of goals.

- Node hot swapping - There is a need to swap out nodes without requiring a restart of the entire system.

- The right tool for the job - Use vLLM with a custom model, SGLang, or LangGraph as needed.

- GPU operating cost minimization - Keep GPU costs to a minimum by using AWS EC2 spot instances (vLLM + ML models) and AWS Bedrock batch inference.

- Optimization and observability - The ability to have fine grained control over prompts and models to allow for optimization, debugging, and observability.

- Self optimization - Use a coding agent to create customized transformers and retrievers.
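To make the node hot swapping goal concrete, here is a minimal sketch of one way it could work. It assumes each node is a plain function from a record to a record and that the pipeline looks nodes up by name on every call. The `NodeRegistry` class, `run_pipeline` function, and node names are hypothetical and only for illustration, not the actual implementation.

```python
import threading
from typing import Callable, Dict, List

# Assumption: a node is modeled as a plain function from one record to another.
Node = Callable[[dict], dict]


class NodeRegistry:
    """Holds the current implementation of each named node (hypothetical design)."""

    def __init__(self) -> None:
        self._nodes: Dict[str, Node] = {}
        self._lock = threading.Lock()

    def register(self, name: str, node: Node) -> None:
        # Registering under an existing name swaps the node in place.
        with self._lock:
            self._nodes[name] = node

    def get(self, name: str) -> Node:
        with self._lock:
            return self._nodes[name]


def run_pipeline(registry: NodeRegistry, order: List[str], record: dict) -> dict:
    # Nodes are looked up by name for every record, so a swap takes effect
    # on the next record without restarting the pipeline.
    for name in order:
        record = registry.get(name)(record)
    return record


registry = NodeRegistry()
registry.register("normalize", lambda r: {**r, "text": r["text"].strip().lower()})
registry.register("score", lambda r: {**r, "score": 0.0})

before = run_pipeline(registry, ["normalize", "score"], {"text": "  Hello "})

# Swap the scoring node while the registry stays live.
registry.register("score", lambda r: {**r, "score": float(len(r["text"]))})
after = run_pipeline(registry, ["normalize", "score"], {"text": "  Hello "})
```

The key design choice in this sketch is late binding: the pipeline stores node names rather than node objects, so replacing an entry in the registry is all a swap requires.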

The cherry on top goal, self optimization, is what differentiates this from traditional systems, and it is the kind of thing that becomes possible when coding is a solved problem. I want to add a node that creates new nodes when it recognizes a pattern. For example, some data sources have an API, and a plugin for each one can be easily coded with tools like Codex, Claude, or other coding LLMs. After completing a few, the common instructions and problems form a pattern, and it becomes clear that the work can be automated. It's also clear that many parts of the system could auto code new nodes to handle specific cases in a more optimized way.
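As a rough illustration of "recognizing a pattern," the sketch below counts how many plugins have been built by hand for each kind of data source and emits a code generation request once a kind repeats often enough. The `PatternWatcher` class, the threshold of 3, and the source kind labels are all assumptions made for the example. A real system would hand the request to a coding agent rather than collect it in a list.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PatternWatcher:
    """Counts hand-built plugins per source kind and flags repeats (hypothetical)."""

    threshold: int = 3  # assumed cutoff for "this is now a pattern"
    counts: Counter = field(default_factory=Counter)
    requested: set = field(default_factory=set)

    def record_plugin(self, source_kind: str) -> Optional[str]:
        """Return a code generation request the first time a kind hits the threshold."""
        self.counts[source_kind] += 1
        reached = self.counts[source_kind] >= self.threshold
        if reached and source_kind not in self.requested:
            self.requested.add(source_kind)
            return f"Generate a reusable plugin node for '{source_kind}' data sources."
        return None


watcher = PatternWatcher(threshold=3)
requests: List[str] = []
for kind in ["rest_api", "csv_dump", "rest_api", "rest_api", "rest_api"]:
    request = watcher.record_plugin(kind)
    if request is not None:
        # Placeholder: a real system would send this prompt to a coding agent.
        requests.append(request)
```

In this run only the third `rest_api` plugin triggers a request. Later ones are ignored because the pattern has already been handed off.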

These goals had a few interesting side effects:

- The agent as a whole doesn't look like the typical chat UI that displays the model's "thinking." It's closer to a data pipeline that is self learning and adapting.

- The rate at which the system gets smarter appears higher than with a traditional trained ML system.

- Running it as a batch system produces a nice, smooth 98-100% GPU usage graph, and it can scale horizontally as needed, which makes resource allocation predictable.

- Having the system work as an orchestrator of agents makes it possible to subdivide the effort into individual components that different teams can manage with different tools. You don't get locked in to a vendor or siloed into one set of tools.

- It also leads me to believe that the development of systems that improve themselves is about to advance greatly. Traditional machine learning has served this field well, and the trend will continue to curve upward.

My view on what happens after coding is solved is that systems will become more flexible. The current way of developing software is akin to print media in the early days of the internet. Companies first attempted to make exact copies of their magazines online, and only over time did the formats we see now develop. New systems will be much more dynamic, with the ability to self optimize and add features for users' specific edge cases, with limited or no involvement from a development team beyond providing guidelines on how to add a new feature. This is already happening in a small way as LLMs generate a plan and then call APIs or MCP servers.

If you're working on a project like this, I'd like to connect. I'm looking for a new position developing agents leveraging LLMs and ML. I'm a senior developer with full stack development and product manager experience.