Reducing friction when migrating vendors by utilizing LLMs

I've been thinking about vendor lock-in lately as I've been switching between various service options; one thing that has become clear is that the migration effort is not as large a factor as it once was. LLMs can assist with research, solution creation, and applying it across the codebase. The ramp up to start using an LLM to assist in migration is low with many IDEs available with assistants. For more complex needs, there are services like the Context7 model context server, or creating an in-house Retrieval-Augmented Generation (RAG) service can be added to the workflow.

This has business impacts for both vendors and customers. For vendors, this results in reduced lock-in protection but also the ability to take on new customers with less effort. This is all assuming their service is well documented. Having good documentation has always been a good way to allow customers to adopt a service without needing to provide consultation services for every customer. However, for many vendors, integration services are the core business, as the service is a complex and prolonged task. I don't see this model disappearing; if anything, more complex integration will be possible with higher reliability, as much of the workflow can be automated.

For customers, this reduces the risk of working with a new service, as you can either reduce upfront integration costs or reallocate more effort to testing. Some tips to make a migration easier:

- Architect the codebase to be modular when possible. This helps reduce the context window size needed for the LLM and improves accuracy.

- Utilize automated testing aggressively; this will enable an LLM to retry until it gets it right in the same way a human would.

- If ready made documentation is not available, then creating it goes a long way to cut down on the LLM from wandering. Ideally, having sample transformations from one version to another available to the LLM. LLMs are much more effective at applying the same pattern over and over across a codebase than developing the original solution.

- Provide instructions to focus on one area only, to prevent the LLM from making sweeping changes that are hard to review.

- Watch out for logic changes; LLMs like to "fix" things. Sometimes, code is slightly different than what an LLM expects; it will want to keep attempting to alter your code to what it expects.

Conclusion

The end result is the weak links in the chain of information, which is a mix of how much effort the LLM should put in and the ability of Context7 search to surface the correct context. I believe that LLMs with tools will be able to solve this in a much smoother way in the near future. There are some custom solutions people use that are solving pieces of it, but I've yet to see a widely available, easy-to-setup complete solution. Currently, developers will still need to read the documentation carefully to develop that context-specific solution, but application across the codebase where there is a repeat pattern has worked well.

My next steps are to check out alternatives—Tavily, GoCodeo—and see if Google search can be added to the workflow with something like Playwright MCP.

Examples

Migration example

Now on to a real-world example, where dreams of slick, effortless integrations come to an end. In each phase—research, solution creation, and application to the entire codebase—there are pros and cons. For the last stage of applying across a codebase, the models shined once a solid pattern was well defined and the task was to repeat an existing change. Research + solution creation has mixed results.

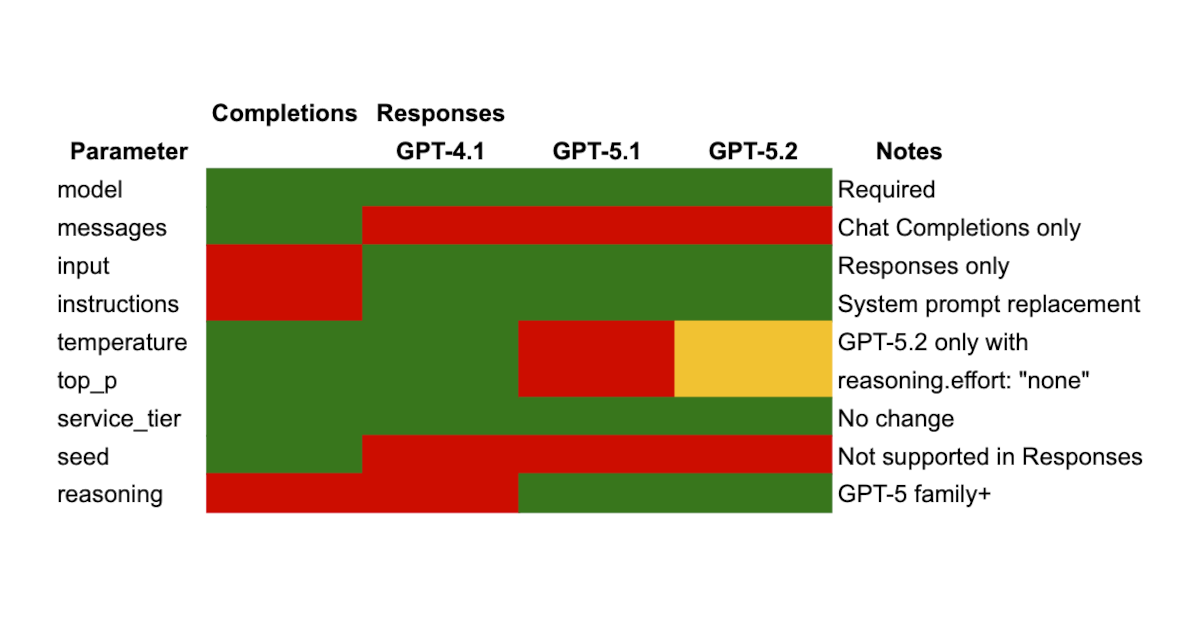

The example is moving from OpenAI completions to responses api endpoint. This captures where both developers and LLMs can get caught up in some minor details. There are three information cases

- Features were removed

- Features were still available but under certain conditions not completely documented in the endpoint but in a separate documentation

- Features were unchanged; documentation LLM was confused due to selecting wrong documentation

Claude Haiku 4.5

First I used Claude Haiku 4.5 generate two examples, its cutoff date is Feb 1, 2025 meaning that the OpenAI responses API was not included, as it was released in March 2025. Without additional documentation from Context7, its responses example is a quite oddly generated beta API endpoint, which is to be expected given the cutoff date.

With Context7, it needed additional guidance around which documentation set to use. The OpenAI platform docs do help with this, but the OpenAI Python documentation doesn't contain the full parameter list.

After two samples were generated, I took an existing chat completions code sample I had that utilized the service tier, seed, and temperature fields. Service tier is unchanged; the seed field has been removed. The temperature field is more nuanced; it exists but is not supported for GPT-5 models and defaults to 1. Its recommended replacement is nucleus sampling, top_p. This looks kind of like a cosine similarity optimization, but that's another discussion for another time.

For service tier, since the Context7 documentation selected by the LLM would default to the Python documentation—which is a trimmed version focused on Python—the LLM would assume the parameter was not supported. Nudging it to use the OpenAI platform allows the documentation to be found. This clunky part highlights that a much better search is needed than what Context7 currently provides.

The temperature field gets passed over as not a problem, as it's present in the field list, but the model guidance specifies the cases where it is supported and not supported. This comes back to the research part needing to go much deeper in detail, past just the new API endpoint documentation, to get full context.

For seed, it's removed completely, and it makes the correct recommendation to either stick with chat completions or drop the field.

Claude Opus 4.5

Without Context7, Claude Opus 4.5 was able to generate somewhat of a sample for chat completions and chat responses endpoints. It included the seed parameter and the temperature parameter. It removed the seed parameter after being asked to check each parameter.

With Context7, it decides to remove the service tier parameter, as the chosen documentation doesn't have it. However, it does search a little deeper for more context than the Haiku model. Temperature is kept; service tier and seed are removed.

With Context7 and prompting it to use the OpenAI platform documentation, it keeps the temperature and service tier. It removes seed. But it doesn't go deep enough to see what to do with the temperature field and top_p. However, it would have seen the recommendation, as it lists all the parameters in a chart. The top_p documentation in the platform documentation does recommend using it as a replacement for temperature.

With leading questions, it starts to get into the details around how to handle the temperature field migration. This moves into the territory of too much hand-holding and losing out on the benefits of an LLM.

Claude Haiku 4.5

Example 1: Chat Completions with one_shot function

from openai import OpenAI

def one_shot(messages: list[dict], instructions: str, model: str = "gpt-4o") -> str:

"""Generate a single response from chat completions."""

client = OpenAI(api_key="your-api-key")

# Prepend system message to messages array

chat_messages = [{"role": "system", "content": instructions}] + messages

response = client.chat.completions.create(

model=model,

messages=chat_messages,

temperature=0.7,

max_tokens=150

)

return response.choices[0].message.content

# Usage - messages contain only conversation, instructions are separate

messages = [

{"role": "user", "content": "What is quantum computing?"},

{"role": "assistant", "content": "Quantum computing uses quantum bits..."},

{"role": "user", "content": "How does it differ from classical computing?"}

]

instructions = "You are a helpful assistant."

result = one_shot(messages, instructions)

print(result)

Example 2: Responses API with one_shot function (multi-turn support)

from openai import OpenAI

def one_shot(messages: list[dict], instructions: str, model: str = "gpt-4o") -> str:

"""Generate a single response from the Responses API, handling multi-turn conversations."""

client = OpenAI(api_key="your-api-key")

response = client.responses.create(

model=model,

instructions=instructions,

messages=messages

)

return response.output_text

# Usage - messages contain only conversation, instructions are separate

messages = [

{"role": "user", "content": "What is quantum computing?"},

{"role": "assistant", "content": "Quantum computing uses quantum bits..."},

{"role": "user", "content": "How does it differ from classical computing?"}

]

instructions = "You are a helpful assistant."

result = one_shot(messages, instructions)

print(result)

Code to be migrated

client = llm_client.client

if "gpt5" in llm_client.model:

raise ValueError("gpt5 model not supported for summarize_response")

response = await client.chat.completions.create(

model=llm_client.model,

messages=messages,

temperature=llm_client.temperature,

service_tier=llm_client.service_tier,

seed=llm_client.seed,

)

await client.close()

Solution proposed by LLM

client = llm_client.client

# Extract system instructions from messages

system_message = next(

(msg["content"] for msg in messages if msg["role"] == "system"),

"You are a helpful assistant."

)

# Filter out system messages for the Responses API

conversation_messages = [msg for msg in messages if msg["role"] != "system"]

response = await client.responses.create(

model=llm_client.model,

instructions=system_message,

messages=conversation_messages,

temperature=llm_client.temperature,

service_tier=llm_client.service_tier,

)

await client.close()

Claude Opus 4.5 without Context7, cut off date May 2025

client = llm_client.client

# Extract system instructions from messages

system_instruction = None

input_messages = []

for msg in messages:

if msg["role"] == "system":

system_instruction = msg["content"]

else:

input_messages.append(msg)

response = await client.responses.create(

model=llm_client.model,

instructions=system_instruction,

input=input_messages,

temperature=llm_client.temperature,

service_tier=llm_client.service_tier,

seed=llm_client.seed,

)

result = response.output_text

await client.close()

Claude Opus 4.5 with Context7 + prompting to use open ai platform docs

client = llm_client.client

# Extract system instructions from messages

system_message = next(

(msg["content"] for msg in messages if msg["role"] == "system"),

None

)

# Filter out system messages for the Responses API

conversation_messages = [msg for msg in messages if msg["role"] != "system"]

response = await client.responses.create(

model=llm_client.model,

instructions=system_message,

input=conversation_messages, # Note: use 'input' not 'messages'

temperature=llm_client.temperature,

service_tier=llm_client.service_tier,

# seed is NOT supported in Responses API - removed

)

result = response.output_text

await client.close()

Claude Opus 4.5 with Context7 + prompting

With leading questions prompting to use open ai platform docs and focus on temperature, it starts to come up with a work around the specifics for different models but doesn't figure out GPT 5.2.

client = llm_client.client

# Extract system instructions from messages

system_message = next(

(msg["content"] for msg in messages if msg["role"] == "system"),

None

)

# Filter out system messages for the Responses API

conversation_messages = [msg for msg in messages if msg["role"] != "system"]

# Build request params based on model type

request_params = {

"model": llm_client.model,

"instructions": system_message,

"input": conversation_messages,

"service_tier": llm_client.service_tier,

}

# Temperature/top_p only supported for gpt-5.1/5.2 with reasoning=none

# For older gpt-5/gpt-5-mini/gpt-5-nano, these will raise an error

if "gpt-5.1" in llm_client.model or "gpt-5.2" in llm_client.model:

# Only include temperature if using no reasoning

request_params["reasoning"] = {"effort": "none"}

request_params["temperature"] = llm_client.temperature

elif "gpt-4" in llm_client.model:

# GPT-4 models support temperature normally

request_params["temperature"] = llm_client.temperature

# For gpt-5, gpt-5-mini, gpt-5-nano: don't include temperature

response = await client.responses.create(**request_params)

result = response.output_text

await client.close()